Author: Andrés Rieznik

Senior Researcher

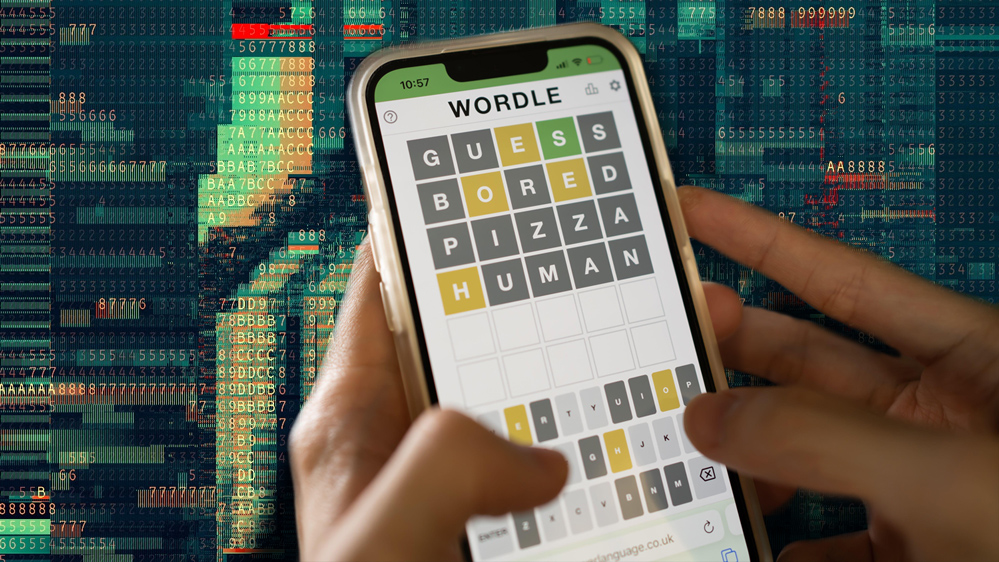

Wordle and hacker behavior

The game "Wordle" went viral on the internet last month, and if you arrived at this article, you are probably familiar with it. Interestingly, when you play it, your mindset is similar to that of a scientific researcher.

Any researcher wants to maximize the information obtained when running an experiment, and this is also the aim in Wordle. For example, when you make your first guess, your goal is probably not to hit the correct word (the chances are very low, given that there are thousands of 5-letter words) but to obtain a result that lets you discard as many words as possible, so that your next guess is made against a vastly reduced set of words.

For instance, the word "fuzzy" is a bad choice as a first guess. The correct word will most likely not contain any of these letters, and if that is the result, you can only discard the 5-letter words with an “f” or a “z,” which are a small percentage of the possible words. On the other hand, you know that “stone” would be a better choice. How do you know that? Consciously or not, you realize that this guess will probably enable you to rule out a much larger set of words. Learning whether the target word contains common letters such as “s” or “e” lets you discard a large number of words whatever the result, so your next guess is made against a reduced set of options.

Similarly, a researcher is trying to maximize the information obtained from an experiment. They will try to design an experiment that maximizes their chances of reducing the set of models that could explain the reality they want to understand. This is how information is quantified: a result is as informative as the number of possibilities it lets you discard.

As an exercise, imagine you have two guesses remaining and only three possible words, all of them equally probable: "stock," "range," and "river." Is there a best choice? Take a second to think about it. The answer is that you should choose "range" or "river," but not "stock." Why? If you guess "stock" and it is wrong, you learn nothing that separates "range" from "river," so on your second guess you still have to choose between them. If you instead guess "range" or "river" and it is wrong, the feedback tells you whether the correct word starts with the letter "r," which eliminates either "stock" or the other remaining word, so you are certain to win on the second guess.
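The reasoning in this exercise can be checked with a few lines of Python. The sketch below is only an illustration, not the code behind our tool: the helper names `feedback` and `partition` are hypothetical, and the feedback rule assumed is the usual Wordle one (green for the right letter in the right spot, yellow for a letter present elsewhere, gray otherwise). It groups the possible answers by the feedback each guess would produce.

```python
from collections import Counter, defaultdict

def feedback(guess: str, target: str) -> str:
    """Wordle-style feedback: G = right spot, Y = wrong spot, - = absent."""
    marks = ["-"] * len(guess)
    # Target letters that were not matched exactly can still earn a yellow mark.
    unmatched = Counter(t for g, t in zip(guess, target) if g != t)
    for i, (g, t) in enumerate(zip(guess, target)):
        if g == t:
            marks[i] = "G"
    for i, g in enumerate(guess):
        if marks[i] != "G" and unmatched[g] > 0:
            marks[i] = "Y"
            unmatched[g] -= 1
    return "".join(marks)

def partition(guess: str, candidates: list[str]) -> dict[str, list[str]]:
    """Group the candidate answers by the feedback that `guess` would receive."""
    groups = defaultdict(list)
    for target in candidates:
        groups[feedback(guess, target)].append(target)
    return dict(groups)

candidates = ["stock", "range", "river"]
for guess in candidates:
    print(guess, partition(guess, candidates))
```

With "stock" as the guess, "range" and "river" land in the same group because both produce all-gray feedback. With "range" or "river" as the guess, every candidate ends up in its own group, so the feedback identifies the answer.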

What does this have to do with hacker behavior?

Understanding hackers' behavior is a costly process and requires careful design to test hypotheses while maximizing statistical power. So, when we design an experiment at the BitTrap Attacker Behavioral Labs, we run algorithms that implement Adaptive Design Optimization (ADO), choosing questions that maximize information gain considering previous answers [Yang, 2021; Chang, 2021]. This is precisely what you intuitively do when playing Wordle.

Of course, finding the most informative design for an experiment requires a vast amount of computation. It also requires a mathematically rigorous way of computing two things: all the models (or words, in Wordle) that can be discarded given each possible result of an experiment, and the probability of each result.

The details of this computation are too complex to be addressed here. Still, you can have an intuitive idea of what is going on when we calculate the amount of information of a given experimental design by playing with the box below, where you can calculate each word's expected amount of ‘information value’ among a set of words when playing Wordle. After you press the “submit” button, you’ll find out the expected information gain of each word and how they rank against each other.

Returning to our example where the universe of words is restricted to "stock," "range," and "river," you can observe that "stock" has an expected information value of 1.52 while "range" and "river" have 1.58. Therefore, these last two words have a higher information value.
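As a rough guide to where numbers of this kind can come from, here is a worked calculation under a textbook assumption that is not necessarily the formula used by the box: the information value of a guess is taken to be the Shannon entropy of the feedback it can produce, with the three words equally likely. Guessing "range" or "river" gives a different feedback for each of the three possible answers, while guessing "stock" gives the same feedback for "range" and "river."

```latex
\begin{align*}
H(\text{guess}) &= -\sum_{f} p(f)\,\log_2 p(f) \\
H(\text{range}) = H(\text{river}) &= -3 \cdot \tfrac{1}{3}\log_2\tfrac{1}{3} = \log_2 3 \approx 1.58 \text{ bits} \\
H(\text{stock}) &= -\tfrac{1}{3}\log_2\tfrac{1}{3} - \tfrac{2}{3}\log_2\tfrac{2}{3} \approx 0.92 \text{ bits}
\end{align*}
```

Under this assumption the ranking is the same, and the value for "range" and "river" matches. The absolute value for "stock" depends on the exact formula, which is not spelled out in this article, so it need not match the figure reported by the box.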

Go ahead and play: write your list of five-letter words in the box, press enter, and we will return the expected amount of information for each one. If you play Wordle only with your chosen list, your first guess should be the one with the greatest expected information value. If you want to know the details of our algorithm, you can visit our Google Colab page here.

Moreover, unlike in Wordle, where each guess has the same cost (probably only the time it takes you to think of the word), different trials in a real-world experiment may have different associated costs.

For instance, one of our main goals at the BitTrap Attacker Behavioral Labs is to answer a single question: once an attacker has successfully broken into a system and wants to monetize the hack, would he rather secure a bounty from his victim in exchange for revealing the hack than continue looking for a potential reward? And what is the minimum amount he would be willing to accept?

At the BitTrap Lab, we conduct experiments using vulnerable, BitTrap-protected endpoints in the cloud. The process consists of deploying these endpoints with varying reward values. These values are controlled by an AI algorithm we implemented, which considers prior results and chooses the candidate reward with the highest information value per unit cost for the next trial. In this article, we explained how our AI works.
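To make the idea of "information value per unit cost" concrete, here is a minimal Python sketch. It is a toy model and not our production algorithm: the candidate thresholds, the uniform prior, the cost function, and the assumption that an attacker accepts any reward at or above his minimum are all hypothetical and chosen only for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def expected_info_gain(reward, prior):
    """Expected information from offering `reward`.

    `prior` maps each hypothetical minimum acceptable amount to its probability.
    Toy assumption: the attacker accepts if and only if reward >= his minimum,
    so the expected gain equals the entropy of the accept/reject outcome.
    """
    p_accept = sum(p for threshold, p in prior.items() if reward >= threshold)
    return entropy([p_accept, 1 - p_accept])

def best_reward(rewards, prior, cost):
    """Pick the reward with the highest expected information per unit cost."""
    return max(rewards, key=lambda r: expected_info_gain(r, prior) / cost(r))

def update(prior, reward, accepted):
    """Keep only the hypotheses consistent with the outcome, then renormalize."""
    kept = {t: p for t, p in prior.items() if (reward >= t) == accepted}
    total = sum(kept.values())
    return {t: p / total for t, p in kept.items()}

# Hypothetical setup: four equally likely minimum amounts, four candidate rewards.
prior = {100: 0.25, 200: 0.25, 400: 0.25, 800: 0.25}
rewards = [100, 200, 400, 800]

def cost(reward):
    """Hypothetical cost of a trial: a fixed deployment cost plus the reward."""
    return 50 + reward

choice = best_reward(rewards, prior, cost)
print("next reward to deploy:", choice)
print("belief if it is rejected:", update(prior, choice, accepted=False))
```

In this toy setup, the most informative trial on its own would be a reward of 200, which splits the hypotheses in half. Once cost is taken into account, the cheaper reward of 100 gives more information per unit spent, which is the kind of trade-off that does not arise in Wordle.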

In conclusion, when we play Wordle our mindset is similar to that of a scientific researcher: consciously or not, we are trying to maximize the expected information gain from each trial. We do so using our own brain's natural neural networks. Scientific researchers, on the other hand, run AI algorithms on powerful computers, trying to improve their measurements with each iteration. The spirit of the task, in both cases, remains the same.

References

Chang, J., Kim, J., Zhang, B. T., Pitt, M. A., & Myung, J. I. (2021). Data-driven experimental design and model development using Gaussian process with active learning. Cognitive Psychology, 125, 101360.

Yang, J., Pitt, M. A., Ahn, W. Y., & Myung, J. I. (2021). ADOpy: a python package for adaptive design optimization. Behavior Research Methods, 53(2), 874-897.